Running DeepSeek R1 Locally

Run DeepSeek Locally with Docker-Compose online and offline

Run DeepSeek Locally with Docker-Compose

Running DeepSeek locally with Docker-Compose is possible on a Mac, though a lighter-weight version of the model is recommended.

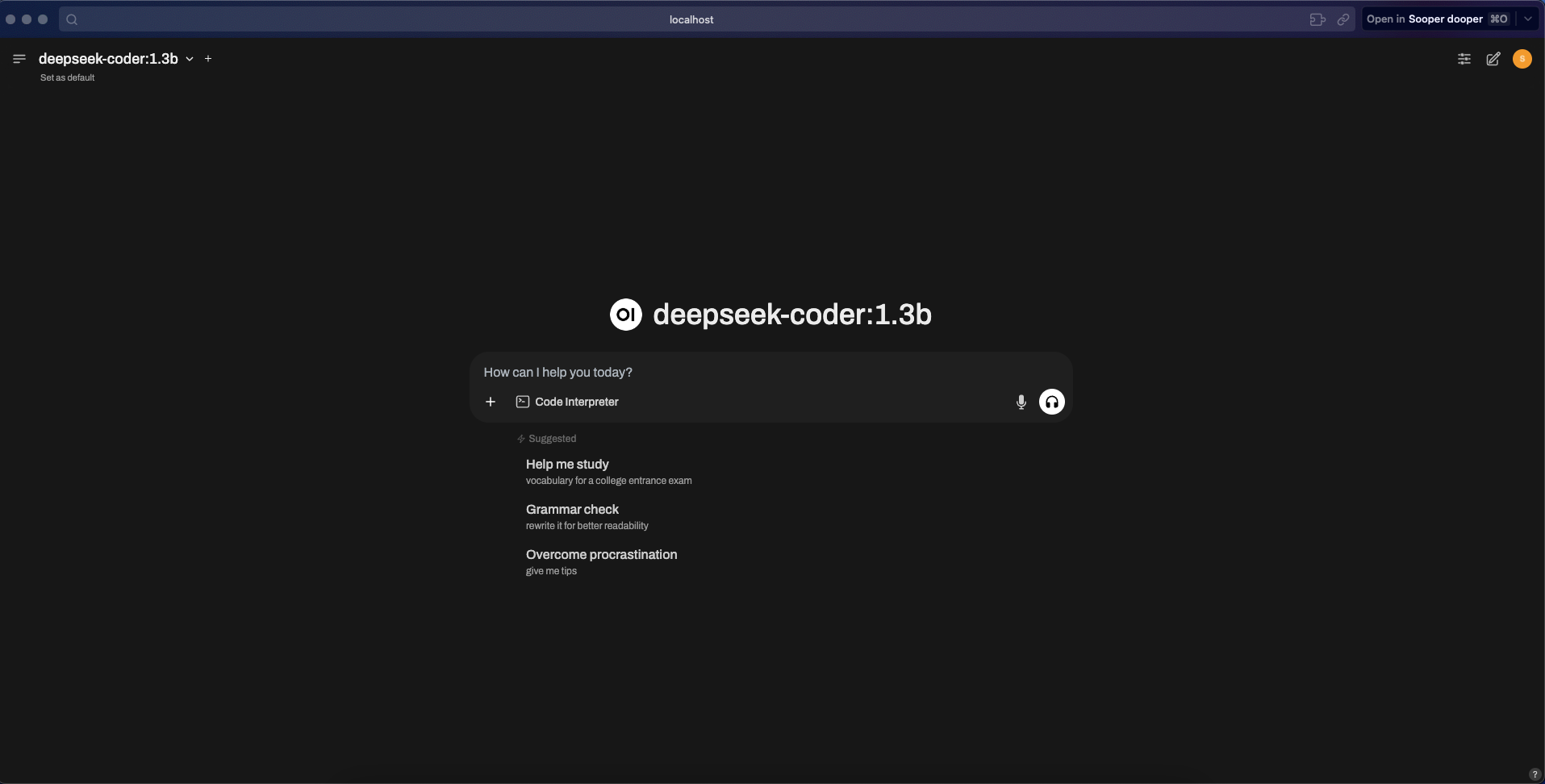

This guide walks you through running DeepSeek on localhost with a web UI.

Code found here: https://github.com/AngelLozan/Local_Deep_Seek

Run with web interface

These steps require an internet connection.

1. Install Ollama

2. Pick a model based on your hardware:

```
ollama pull deepseek-r1:8b        # Fast, lightweight
ollama pull deepseek-r1:14b       # Balanced performance
ollama pull deepseek-r1:32b       # Heavy processing
ollama pull deepseek-r1:70b       # Max reasoning, slowest
ollama pull deepseek-coder:1.3b   # Code completion assist
```

3. Test the model locally via the terminal:

```
ollama run deepseek-r1:8b
```

4. Install Docker

5. Install Docker-Compose

6. Create a Docker-Compose file as seen in this repo. If you wish to use an internet connection, you can simply uncomment the image for the open-webui service and remove the build.

7. Open the Docker app and run `docker-compose up --build`

8. Visit http://localhost:3000 to see your chat.
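For orientation, here is a minimal sketch of what a two-service compose file for this setup could look like, written out by a small shell script. It is not the file from the repo. The image names, volume names, and the `OLLAMA_BASE_URL` value are assumptions chosen to match the commands used in this guide (a container named `ollama`, a service named `open-webui`, the chat on port 3000, and the Ollama API on port 11434). The output filename `docker-compose.example.yml` is also just an illustrative default.

```shell
#!/bin/sh
# Hypothetical sketch: writes an example compose file for Ollama + Open WebUI.
# NOT the repo's actual file; service names, images and volumes are assumptions.
COMPOSE_FILE="${COMPOSE_FILE:-docker-compose.example.yml}"

cat > "$COMPOSE_FILE" <<'EOF'
services:
  ollama:
    image: ollama/ollama
    container_name: ollama
    ports:
      - "11434:11434"
    volumes:
      - ollama_data:/root/.ollama

  open-webui:
    # Online variant: pull the prebuilt image.
    image: ghcr.io/open-webui/open-webui:main
    # Offline variant: comment out `image` above and build from a local Dockerfile.
    # build: .
    container_name: open-webui
    ports:
      - "3000:8080"
    environment:
      - OLLAMA_BASE_URL=http://ollama:11434
    volumes:
      - open_webui_data:/app/backend/data
    depends_on:
      - ollama

volumes:
  ollama_data:
  open_webui_data:
EOF

echo "Wrote $COMPOSE_FILE"
```

You could then try it with `docker-compose -f docker-compose.example.yml up`. If Ollama is also running directly on the host, the `11434:11434` mapping may clash with it, so stop one of the two or change the host-side port.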

Run with VScode (offline):

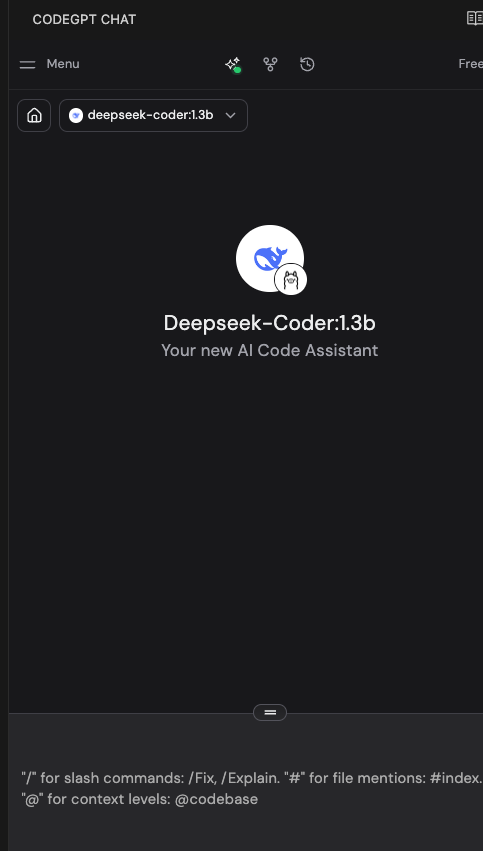

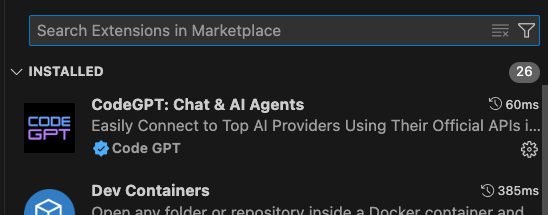

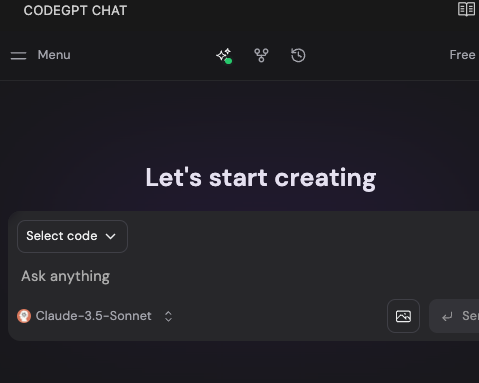

Follow steps 1-2 in "Run with web interface" above, then install the CodeGPT for VScode extension.

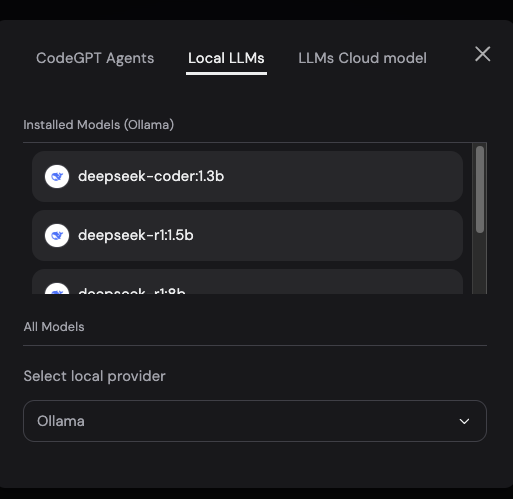

Navigate to the Local LLMs section. This is likely accessed from the initial model selection dropdown (pictured with Claude selected).

From the available options, select ‘Ollama’ as the local LLM provider.

You can now turn off your internet connection and continue to chat and analyze code using Local LLMs.

Running Open-webui locally without internet

1. Follow steps 1-2 in "Run with web interface" above.

2. Install `uv`: `curl -LsSf https://astral.sh/uv/install.sh | sh`

3. Create a `uv` env: `mkdir -p ~/<project root>/<your directory name> && cd ~/<project root>/<your directory name> && uv venv --python 3.11`

4. Install open-webui: `cd ~/<project root>/<your directory name> && uv pip install open-webui`

5. Start open-webui: `DATA_DIR=~/.open-webui uv run open-webui serve`

6. Visit localhost and start chatting!

Running locally via docker without internet with Open-webui

1. Follow steps 1-6 in "Run with web interface" above.

2. Next, follow steps 1-4 in "Running Open-webui locally without internet" above.

3. Once this is done, create a `Dockerfile`, like the one in this project, in the directory where the open-webui dependencies live. It mimics the local setup by installing all open-webui dependencies inside the Docker container.

4. Next, start the app: `docker-compose up --build`. If you do not wish to see logs: `docker-compose up --build -d`

5. Visit localhost and start chatting!

6. If models are not available to select, turn on your internet temporarily, go back to the terminal and run `docker exec -it ollama bash`

7. Download the model to your service using the `ollama pull` commands seen earlier in step 2 of "Run with web interface".

8. Verify models are installed with `ollama list` while still in the CLI. If so, you can turn off internet again and exit the CLI with `ctrl + d` or `exit`

9. Restart your `open-webui` container with `docker-compose restart open-webui`
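The model-recovery steps above can also be run without opening an interactive shell in the container. The following is a sketch only: `run` and `refresh_models` are hypothetical helper names, the default model tag is just an example, and it assumes the container is named `ollama` and the compose service is named `open-webui`, as in the commands above. Setting `DRY_RUN=1` prints the commands instead of executing them, which is a convenient way to check what would happen first.

```shell
#!/bin/sh
# Sketch: pull a model into the Ollama container, list the installed models,
# then restart the web UI. Helper names are illustrative, not part of any tool.

# Run a command, or only print it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Usage: refresh_models [model-tag]   (internet must be on for the pull)
refresh_models() {
  model="${1:-deepseek-r1:8b}"
  run docker exec ollama ollama pull "$model" || return 1
  run docker exec ollama ollama list || return 1
  run docker-compose restart open-webui
}
```

For example, `DRY_RUN=1 refresh_models deepseek-r1:14b` prints the three commands, and `refresh_models deepseek-r1:14b` runs them from the directory that holds your compose file.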

Troubleshooting

Inspect the network: `docker network ls` then `docker network inspect < network >`

Inspect Ollama and models:

curl http://localhost:11434/api/tags

- or -

docker exec -it ollama ollama list
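To turn the `/api/tags` check into a yes/no answer for one specific model, a small filter can scan the JSON for its name. This is a rough sketch: `has_model` is a hypothetical helper, and the `grep`/`sed` parsing is a crude stand-in for a proper JSON parser. It assumes the response lists each model under a `"name"` key.

```shell
#!/bin/sh
# Sketch: succeed if the given model tag appears as a "name" value in the
# JSON read from stdin (for example, the output of Ollama's /api/tags).
# has_model is an illustrative helper, not part of Ollama.
has_model() {
  grep -oE '"name"[[:space:]]*:[[:space:]]*"[^"]*"' \
    | sed -E 's/^"name"[[:space:]]*:[[:space:]]*"([^"]*)"$/\1/' \
    | grep -Fxq -- "$1"
}
```

A possible use: `curl -s http://localhost:11434/api/tags | has_model deepseek-r1:8b && echo "model is installed"`. The match is exact, so `deepseek-r1` alone does not match `deepseek-r1:8b`.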

Restart open-webui container: `docker-compose restart open-webui`

Depending on your hardware, running `docker-compose down` then `docker-compose up -d` to restart built containers can take a moment. Check progress with `docker logs < service name >`